Responsible AI

Responsible AI requires Regulation - Govern AI for Humanity

The developer conference season for 2025 ended before summer (at least Microsoft Build did, which is the one I mostly pay attention to), and the buzzword winner of 2025 was "Agentic". Everything should be enabled for multi-modal AI agent interactions, and the agents should be made to interact with each other and with all existing legacy systems and tools. Now, half a year later, the agentification is at full speed, with MCP as the de facto standard and debate on whether AI Skills are what comes after AI Agents, and I must admit that even more use cases can now benefit from AI because of it. Particularly so within the realm of coding, but it is easy to see that all knowledge-based work will become agentic. The tremendous potential of the technology breakthroughs of recent years is pointless to argue against.

What I will argue against, however, is how irresponsibly and unethically these AI breakthroughs have come about and are currently being rolled out and used. My top concerns:

- The frontier models of today are built upon theft. I will not debate my stance on this. The fact that multi-modal AI models can generate text in the style of known authors, replicate voices, generate images and videos in the style of artists and so forth, is evidence enough. The huge data sets used to train the models largely consist of data that the content creators have never consented to being used for such a purpose, nor have they been compensated for it. If you steal and earn money from what you have stolen, you go to jail. In a few countries you will even risk getting mutilated for doing so.

- Energy. The compute and storage needed for training and using AI models are huge, with enormous needs for electricity, water for cooling, and natural resources in general for building data centers and the electronics to fill them. The hyperscalers might claim they run data centers on renewable energy, but they share the power grid with the rest of us, so when they use it all, the hospitals, fire and police stations, people's homes, etc. end up having to use other power sources. Multiple new models with similar capabilities are also being trained in parallel, with only some getting successfully deployed, resulting in enormous energy waste. On top of this, the output from using AI often does not scale well energy-wise relative to its convenience. Think about all the prompt tweaking and trial-and-error runs you have done to get the generated output to match your needs (make the cat wear a hat, make the cat cuter, make the cat..) and then multiply that by a billion other users. The convenience of using natural language with an artificial system inherently results in it being used instead of a more energy-efficient alternative. Example: You use an LLM to do analytics on a large database and it outputs a SQL query for you to run to get the information you need. Running the SQL query uses a fraction of the energy the LLM required to generate it, but you do not store the SQL query for the next time you need the information, because it is so convenient to simply ask the same thing again.

- Frontier AI models for general use are not safe enough for deployment to the public. Had they been a medicine or a food, they would not be allowed for human use. This report and others have the details, but in the end it boils down to how astoundingly unregulated this technology still is. While they are the best mansplainers the planet has ever seen (qualified guessing is what they do..) and can be very useful, they are unpredictable and not really possible to control with certainty. New AI evals are being created all the time and models are constantly being fine-tuned to pass them, but every time weights and biases are tweaked by fine-tuning, all old evaluations are also affected. The general consensus seems to be that 100% safety is not achievable.

- Economy and social transformation. It does not really matter whether true AGI and ASI become a reality or not; what is apparent is that any task a human can do based on existing knowledge will eventually be possible to replicate by machine. At least that is the goal of all the frontier AI model makers. The less digital the task is, the longer it will take, but combined with robotics any such task is in scope. The current financial systems are not really designed for an abundance of non-consuming and non-taxpaying workers. In the short term they will greatly reward some (successful implementers and those with existing money to invest), but eventually they will collapse due to extreme inequalities, with worst-case scenarios involving revolution and more wars.

Listing these concerns, and there are more than the 4 top-of-mind ones above, probably makes me sound like a pessimistic opponent of all things AI. I am not. I do think we might finally be on the verge of achieving abundance using technology, although the word "abundance" is a bit worn out, as it has been echoed as an excuse by so many tech company executives in recent years. We are just not on the path to abundance for everyone at the moment.

What should we do then to get on the path to abundance for everyone? Short answer: standardize, open-source, regulate, innovate, govern and vote accordingly. Not necessarily in that order. Longer answer: read along.

Last year the UN Advisory Body on Artificial Intelligence released the report Governing AI for Humanity, which concludes with 7 Calls for Action. As far as I can tell, none of those actions has been acted upon. In all fairness, the world being in general turmoil has made it hard for the UN to act as a global governing body for much of anything in quite some time now. Especially so in 2025.

With that said, there have been some attempts at regulation in isolated markets, with the latest relevant ones in the USA being SB 53 in California and the RAISE Act in New York (pending the Governor's signature). The more prominent one, if you ask me, is however the EU AI Act, which is the most comprehensive regulatory framework to date that I know of. The main problem with all of these is that they lack ambition on the bigger picture and mostly focus on safety issues and risk reduction. Solving only one of my main concerns (the third, safety) is not enough. Unfortunately, the existing regulations are not even going to do that, as they are weak in penalizing non-compliance and have been watered down by lobbying efforts.

Noteworthy in all of this is that the makers of today's frontier AI models themselves truly believe that AGI and ASI are on the horizon and that their advent demands a reshaped society and new economic systems. Do not take my word for it; listen to the latest DeepMind podcasts with Hannah Fry, where Shane Legg, co-founder of DeepMind, states the need for reshaping society and Demis Hassabis, co-founder and CEO of DeepMind, claims that new economic systems are needed.

Alright, so far I have written nothing more than something akin to a vague problem definition. Is that enough to start drawing up solutions? Perhaps it is, but I would like to raise the ambition level a notch or two, envision what future we want, and make governing AI part of working towards such goals. As of late I have been thinking about the 1998 movies Armageddon and Deep Impact and realized that is sort of the future I want. Not that I want a mass-extinction-level asteroid to hit our planet, of course, but I want the single most feared risk to humankind and our planet, the one that dwarfs all other threats, to be something that is not a product of human activities.

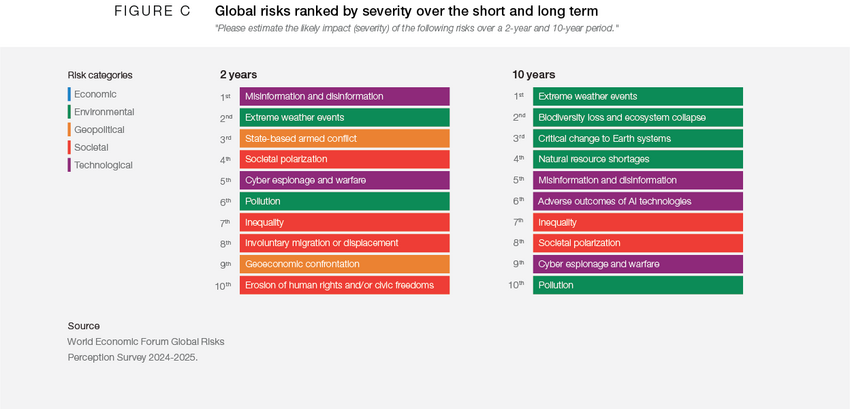

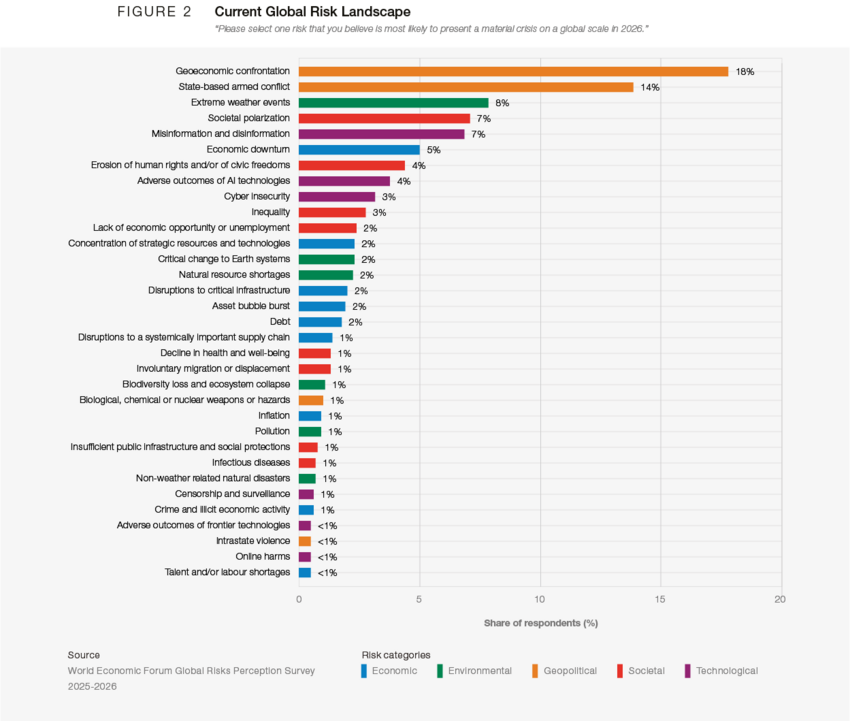

The World Economic Forum publishes a yearly report, the Global Risks Report, that lists and ranks global risks based on input from over 900 experts. Needless to say, a yet undetected asteroid of mass-extinction size hitting the planet did not make it into the Global Risks Report 2025, nor do I expect it to be in the 2026 edition when it comes out early next year. What the report does contain, however, is several risks that directly or indirectly involve AI, and I expect many of those to be ranked even higher in the 2026 edition.

Before I published this post the 2026 report was released. This time based on input from 1300 experts:

So, if we continuously prioritize our efforts to mitigate or even eliminate the top risks from the Global Risks Report, we will eventually get a future where the risk I want to see at the top is ranked the highest, right? Technically that might be correct, but I live in a relatively privileged bubble, and it is unwise to assume that my hopes for the future are relevant to everyone else.

Luckily, people much more knowledgeable than me about what the world and humankind need managed in 2015 to get all member states of the United Nations to agree on sustainable goals for a better future by 2030. That leaves 4 more years to achieve the Sustainable Development Goals (SDGs) that the global community has committed to. The SDGs recognize that action in one area will affect outcomes in others, and that development must balance social, economic and environmental sustainability. Countries have committed to prioritize progress for those who are furthest behind.

If we acknowledge that the current state of artificial intelligence rollout is not in line with the SDG commitments, and is even fuelling some of the risks outlined in the World Economic Forum Global Risks Report, then it should in principle be easy to accept the need for regulation.

High Level Mechanisms enabling Governing AI

The problem with regulating a technology that evolves faster than any before it (new models and versions are released almost daily at the moment) is that no envisioned standard remains valid long enough to be useful as a basis for regulation. That is the main thing that needs to change. We need to implement a technology bridge, shared by most AI uses, that allows regulation to be effective. Without it, politicians do not seem to fathom how to regulate.

There are plenty of suggestions out there on the regulation needed to avoid uncontrollable AGI being developed. The best one I have found is that of https://keepthefuturehuman.ai/

Summarized:

- Compute oversight - Standardized tracking and verification of AI computational power usage. Model registration requirement based on FLOP: 10^25 FLOP per model training and 10^19 FLOP per second for model inference operation. Cryptographic licensing built into hardware (GPU/CPU/NPU).

- Compute caps - Hard limits on computational power for AI systems, enforced through law and hardware. Ban training models with more than 10^27 FLOP and running models that use more than 10^20 FLOP per second.

- Enhanced liability - Strict legal responsibility for developers of highly autonomous, general, and capable AI. Make company executives personally legally responsible for the harm caused by models in the highest danger category. The risk of going to jail works, company fines do not.

- Tiered safety and security standards - Comprehensive requirements that scale with system capability and risk. This is the tricky part, but it basically means efforts to ensure no system is built that is Autonomous AND General AND Intelligent. Stay away from the center of the AGI death circle (AGI in this case meaning Autonomous General Intelligence rather than Artificial General Intelligence).
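
To make the compute thresholds in this summary concrete, here is a minimal Python sketch of how a registration and cap check could look. The only values taken from the summary are the four thresholds, read as powers of ten. The names `ComputeTier` and `classify` are hypothetical, and treating the registration thresholds as inclusive is my assumption.

```python
from enum import Enum

# Thresholds from the summary above, read as powers of ten.
REGISTER_TRAINING_FLOP = 1e25     # total FLOP used to train a model
REGISTER_INFERENCE_FLOPS = 1e19   # FLOP per second while running a model
CAP_TRAINING_FLOP = 1e27
CAP_INFERENCE_FLOPS = 1e20


class ComputeTier(Enum):
    UNREGULATED = "unregulated"
    REGISTRATION_REQUIRED = "registration required"
    BANNED = "banned"


def classify(training_flop: float, inference_flops: float) -> ComputeTier:
    """Hypothetical helper: map a model's compute footprint to a tier."""
    # Anything above either cap is banned outright.
    if training_flop > CAP_TRAINING_FLOP or inference_flops > CAP_INFERENCE_FLOPS:
        return ComputeTier.BANNED
    # Assumption: reaching either registration threshold triggers registration.
    if (training_flop >= REGISTER_TRAINING_FLOP
            or inference_flops >= REGISTER_INFERENCE_FLOPS):
        return ComputeTier.REGISTRATION_REQUIRED
    return ComputeTier.UNREGULATED
```

A model trained with 3 x 10^25 FLOP would land in the registration tier, while one trained with 2 x 10^27 FLOP would be banned regardless of how cheaply it runs.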

While I do think the Keep The Future Human proposals are great and should be pursued, they are focused primarily on mitigating upcoming AGI/ASI risks and as outlined earlier the current state of AI has enough negatives to warrant regulation as is.

The missing pieces I see as needed to steer both the development and use of AI in a direction that can positively influence both the World Economic Forum Global Risks Report and the UN Sustainable Development Goals are:

- Authentication backed by government approved digital ID.

- United Nations (or other suitable organization) distributed Runtime Compute Tokens for using and training AI.

- Inference provider competition per model, either by regulated licensing or open-source. No single company is allowed AI model exclusivity when releasing to the public. Remember, these models are built upon theft, so the alternative is sending company executives to jail.

I envision that at some point I will have the time to go into more detail on how I think this could actually work technically, but for the sake of getting the ideas out there I will simply try to do short versions.

Authentication - Universal Digital ID

All countries must provide a government sanctioned digital ID for their citizens, and all AI inference providers must regularly verify users against such repositories. No more anonymous use of frontier model AI.

All public facing authenticated systems of a certain size (number of users, amount of data, etc.) must also do the same. Including corporate user repositories.

The heavy lifting of handling authentication for transport and session security will still be handled by application providers, but with properties in their user repositories derived from government sanctioned repositories.

All frontier models and applications that fulfill the criteria of requiring government sanctioned digital ID user verification should be registered in a global digital repository. This repository is then either replicated down to national level or contains nation specific properties. The authorization mechanisms from each registered system should be surfaced (perhaps even moved) in the central repository.

Modifications to existing authentication protocols are likely required for this to be possible, but it would enable important things like:

- Global soft kill switch on specific frontier models. Example: a new version of a model might have passed internal evaluations but is discovered in the wild to give instructions on how to build a bioweapon.

- National soft kill switch on specific frontier models. Example: model passes internal evaluations and internationally agreed upon standardized evaluations, but is discovered to give harmful output when used in some cultures or languages. Evals will never catch everything and language adds dimensions to evaluations.

- Baseline for watermarking. With a shared backend for authentication its properties can be required to be used to determine human origin of AI generated content.

- Authorization consent plane on Global, National and Individual levels. Example: Age restrictions, content restrictions (no ads please, no AI slop please, no bots from unverified users please).

- Universal Digital Wallets. With a platform agnostic shared backend for authentication and authorization consent it will be much easier to manage your data access, and for example supercharge AI Agents with increased contextual awareness by granting access independent of where data resides.

- and more..
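
As a rough illustration of the soft kill switches, here is a minimal Python sketch of a central registry that inference providers would consult before serving a request. Everything in it is hypothetical (the `ModelRegistry` class, its method names, the model and country identifiers); it assumes the digital ID check has already produced a simple verified or not verified answer.

```python
from dataclasses import dataclass, field


@dataclass
class ModelRegistry:
    """Hypothetical central registry of frontier models and their block status."""
    globally_blocked: set[str] = field(default_factory=set)
    nationally_blocked: dict[str, set[str]] = field(default_factory=dict)

    def block_globally(self, model_id: str) -> None:
        # Global soft kill switch: no provider anywhere may serve this model.
        self.globally_blocked.add(model_id)

    def block_in_country(self, model_id: str, country: str) -> None:
        # National soft kill switch: only users in this country are affected.
        self.nationally_blocked.setdefault(country, set()).add(model_id)

    def may_serve(self, model_id: str, country: str, id_verified: bool) -> bool:
        """Check an inference provider would run before serving a request."""
        if not id_verified:  # no anonymous use of frontier models
            return False
        if model_id in self.globally_blocked:
            return False
        return model_id not in self.nationally_blocked.get(country, set())
```

The switch is "soft" in the sense that it only works if providers actually ask the registry; making that unavoidable is what the changes to authentication protocols would be for.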

If you are, like me, familiar with the Microsoft cloud platforms you might notice similarities with how Entra handles Enterprise Applications and Foundry AI models. Yes, this suggestion is heavily inspired by Entra ID. We just need to take it up a notch and handle things similarly at national level at least. After all, it will provide the tools to enforce homologation on the walled gardens of the Internet.

AI Runtime Compute Tokens

What if we distributed the right to use AI, and made it technically impossible to use without it, to everybody on the planet based on criteria determined by established organizations working for a future we want (United Nations, World Economic Forum, you name it) instead of profit driven corporations? We then allow the trade of such rights, so those that do not need or want to use them can sell them. Successfully implemented, that would introduce a new steering instrument for shaping the future and provide the fundamentals for some type of universal basic income that many anticipate will eventually be needed. That is the essence of this suggestion, and a full article on how I think this could technically work is warranted, but I will try to keep it short for now.

Working names:

- AI Runtime Token (ART) - because providing tools enabling AI regulation is initially the primary focus

- AI Compute Token - because by extension we might need to treat all compute power similarly

- AI Runtime Compute Token (ARCT) - catch it all

What to call it is fairly irrelevant at this moment. Sticking with ARCT for now.

Training and using AI already rely heavily on tokenization, and input and output tokens are often used for pricing by inference providers since they reflect the actual compute, and thus the cost, of producing output fairly well. Introducing ARCT would not replace that, but it would introduce caps on how many tokens each user can pass through a model per month relative to its size, independently of how many tokens were actually purchased from the inference provider.
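
A minimal Python sketch of what "relative to its size" could mean in practice. The pricing rule is purely my assumption for illustration (1 ARCT per model token per billion parameters), and both function names are hypothetical.

```python
def arct_cost(model_tokens: int, model_params_billions: float) -> float:
    """ARCT needed to pass `model_tokens` through a model.

    Assumed rule for illustration: 1 ARCT per token per billion parameters.
    """
    return model_tokens * model_params_billions


def monthly_token_cap(arct_balance: float, model_params_billions: float) -> int:
    """How many model tokens a monthly ARCT balance allows on a given model."""
    return int(arct_balance // model_params_billions)
```

With that rule, the same monthly ARCT balance stretches ten times further on a 7 billion parameter model than on a 70 billion parameter one, no matter how many tokens the user has paid the inference provider for.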

Basic concepts of ARCT:

- Issued monthly using blockchain but total amount set by humans using strict measures

- Multiple types of ARCT: Government, Company, Individual

- Consumed from ledger when passed to AI model

- Distributed to nations, companies and individuals based on criteria set by a suitable organization not affiliated with a specific nation. Example criteria for steering global behaviour:

  - Reverse GDP per capita, poorer nations get more ARCT per capita.

  - Renewable energy mix ratio, power grids with high levels of sustainable energy get more, fossil fuel based grids get less.

  - Democracy index, no ARCT to dictatorships and more to highly democratized countries.

  - Companies receive ARCT based on the number of salaried employees. This is to mitigate the apprentice problem and mass unemployment risks.

  - Type of business. Companies trying to solve serious world problems (clean energy, cancer) get more ARCT, those invested in fossil fuels get less (or why not none, please).

  - Starting to see a pattern? What direction would you want for humankind and our planet?

- Marketized. With a blockchain ledger as backend, a global exchange for trading unused ARCT can easily be set up, and ARCT could in the end become the only cryptocurrency that matters.

- Expiration date. By having unused ARCT expire, halting the issuance of new ARCT becomes a possible kill switch.

- Can initially be software based but must eventually be hardware managed (processor architecture changes).
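
The issue, consume, trade and expire mechanics in this list can be sketched in a few lines of Python. This is a plain in-memory stand-in and not a blockchain; the `ArctLedger` class, its methods, and the rule that traded ARCT expires at the end of the current month are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Grant:
    amount: float
    expires_month: int  # last month index in which the grant is usable


@dataclass
class ArctLedger:
    """Hypothetical in-memory stand-in for the ARCT ledger."""
    grants: dict[str, list[Grant]] = field(default_factory=dict)

    def issue(self, holder: str, amount: float, month: int, lifetime: int = 1) -> None:
        # Monthly issuance; by default a grant is only valid in its own month.
        self.grants.setdefault(holder, []).append(Grant(amount, month + lifetime - 1))

    def balance(self, holder: str, month: int) -> float:
        # Expired grants simply stop counting.
        return sum(g.amount for g in self.grants.get(holder, [])
                   if g.expires_month >= month)

    def consume(self, holder: str, amount: float, month: int) -> bool:
        """Spend ARCT, soonest-expiring grant first. False if balance is too low."""
        if self.balance(holder, month) < amount:
            return False
        live = sorted((g for g in self.grants.get(holder, [])
                       if g.expires_month >= month),
                      key=lambda g: g.expires_month)
        for g in live:
            used = min(g.amount, amount)
            g.amount -= used
            amount -= used
            if amount <= 0:
                break
        return True

    def transfer(self, seller: str, buyer: str, amount: float, month: int) -> bool:
        """Trade unused ARCT. Assumption: it expires at the end of the current month."""
        if not self.consume(seller, amount, month):
            return False
        self.issue(buyer, amount, month)
        return True
```

Note how the kill switch falls out of the expiry rule: if nothing is issued for a month, every balance reads zero and `consume` refuses all requests.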

I have yet to dissect a transformer and need to learn more about how LLM neural networks actually work to state this with any level of certainty. But if the architecture is modified so that neural networks are made ARCT aware, I cannot drop the idea that what Anthropic is attempting with their Claude Constitution can be done globally for all models at once. After all, if the neural networks are ARCT aware, then ARCT can be seen as AI food, and whatever criteria humanity specifies for producing ARCT will be a north star for the models to follow.

The EU angle

Can this really be done? Technically, I believe there are harder problems remaining to actually achieve AGI than what is needed to get this working. Politically is another matter.

Most nations are not in the AI race, and I naively imagine they will welcome the introduction of tools that allow homologation of AI. China and the USA are in the race and will probably object to any regulation imposed on them from outside their countries. China, however, might very well agree if the ARCT distribution criteria are considered favourable for them (many citizens, making efforts in renewable energy, albeit poor on democracy indexes and pollution in general). Getting the USA onboard will be the hardest part. Surprise surprise.

Luckily, not all of it has to be implemented (or invented) at once and in all markets. The European Union is in a unique position to start. Perhaps together with Australia, Canada and the United Kingdom, due to current geopolitical reasons. The rest of the world will then follow, willingly or because it has no other choice.

- By the end of 2026 all EU member states will comply with the European Digital Identity Framework and offer their citizens digital wallets compliant with the EU Digital Identity Wallet. While I see pieces missing in the European Digital ID (EDID) to achieve what I want, this is a great start, and it is tremendously important to have official digital citizen verification for such a big market.

- Make EDID mandatory for user verification of EU citizens on all systems of a certain size. Block non-compliant systems from the European market. Push aggressively for this during 2027 (or as soon as all EU member states have EDID implemented), because apparently the current world order does mix and match seemingly unrelated political issues for leverage. Implementation time frames can then be negotiable.

- Regulate in the knowledge that EDID information is available. Examples are age restrictions and content restrictions.

- Prioritize and fund an EU project for ARCT specifications and next generation EDID. One software based to begin with and one hardware based to end with.

- Implement and enforce ARCT at EU level. Be generous with ARCT distribution, as at this point its purpose is to establish the practice and not to put AI usage restrictions on EU citizens.

- Play the ASML card.

- Hand it all over to the United Nations for global governance.

Wait, what is the ASML card? Only a select few companies have the foundries to create the advanced microprocessors realistically needed to train and run inference for AI: TSMC, Samsung, Intel and perhaps GlobalFoundries. What they have in common is that they all need equipment from the Dutch company ASML to mass produce. Every time a new microprocessor plant is set up or a new chip design for increased transistor density is introduced, ASML is what makes it possible.

That is some serious leverage. At any given time the EU, and even more so the Netherlands, can proclaim that from now on this has to be adhered to (chip design following the ARCT specification) or we stop ASML from doing business with you. I am almost baffled this card has not been played already for other political reasons (perhaps it has, but the public is unaware).

There you have it. My brain dump recipe for a brighter future.